The first Heraean Games began as an annual foot race among young women competing for the position of priestess of the goddess Hera.

When my friends and I were discussing possible medical schools to apply to, one of them explained that she chose not to apply to the Pritzker School of Medicine at the University of Chicago after she heard stories of the “competitive” nature of the students. Speaking as a student who loves the excitement and challenge of my courses, however I can obtain the experience, I would definitely enjoy a great deal of competitiveness among my own peers, even in the setting of grinding doctor-hopefuls. Bear in mind that competitiveness is not the same as rigor, and, given our differing beliefs about the situation, it is not immediately self-evident how competitiveness should affect us anyway. This is important because we pre-medical students have become so tunnel-visioned and focused on the goal of entering medical school that “competitive applicant” has become synonymous with “good applicant.” This diction implies that competitiveness is inherent to all good medical school applicants because we know we must compete against other amazing students. Intrigued, I wondered whether there was a healthy amount of competition that would produce the best doctors.

I’ve always loved healthy competition. But, of course, I’ve seen the good, the bad, and the ugly. I’ve studied with friends who always try to one-up my responses to scientific questions in order to find the right answers. I’ve seen jealousy and bitterness on my friends’ faces when I mention my summer internship at a big-name college. And I’ve met students who cower in fear at a contrary opinion or an unfamiliar idea.

As I’ve already mentioned, everyone has a different point of view on competitiveness. David Papineau, Professor of Philosophy at King’s College London and the City University of New York, remarked that there are fewer women than men in philosophy because “Where many men will relish the competitive challenge and enjoy the game for its own sake, many women will see it as the intellectual equivalent of putting balls in pockets with pointed sticks, and conclude that they could be doing something better with their lives.” Indeed, certain fields, such as philosophy or economics, are much more driven by competition than others, with their honorable tests of combat and rigor. In addition, competition distinguishes itself from other forms of rigor in that it has a social component. In light of this, it may seem that competition truly is as trivial as a joust between knights or a foot race among Greeks. Someone who believes competition is just “playing a game” against others might not be inclined to seek it. Not only does this view allow us to manufacture competitiveness through economic, social, biological, and scientific behavior, but we may also observe competition as a phenomenon in and of itself.

Parzival’s tale of jousting and chivalry may exemplify the game of competition that we encounter today. Is education the same way?

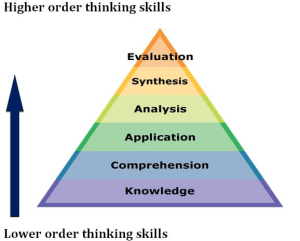

Competitiveness is unavoidably everywhere in academia and education. We compete with each other for positions in graduate programs, different research labs work on the same problems through their respective approaches, and, whether we like it or not, our course grades are usually at least somewhat determined by how well we do relative to other students in the class. And we pre-medical students have a unique kind of competitiveness among ourselves. More generally, through research, discourse, and teaching, knowledge itself (at least in academia) is a competition among ideas. When we test the conductivity of nanomaterials or inquire about Schopenhauer’s view on Kant, we are inviting our presupposed beliefs to be contested against those of others. In the same way that the species most apt to survive persist amid the challenges of a biological environment, only the soundest ideas should remain: any flaw or uncertainty should and must be removed from our foundation of knowledge.

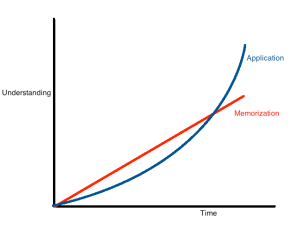

Education is hard. Is competition the only source of this difficulty? If not, could we attain the same or similar rigor necessary to achieve our goals without the harms of competition? Imagine a world in which students would sit through exams and classes to receive grades, and anyone who scored above a fixed cutoff, completed a set number of volunteer hours, and earned an adequate score on some external scale would be admitted to medical school or receive an appropriate GPA. By this, I mean that, without competition, students could not draw any judgement or source of improvement from their relation to their peers. We would need to construct an objective, external framework to provide feedback and criteria on a student’s performance. Certainly, education would still be very difficult, but, apart from the measure of their performance on this scale, students would face only an “internal” struggle to find the motivation to improve from within, rather than the long-lasting dream of being the best epistemologist or heart surgeon in the world.

From the competition to enter medical school, we’ve seen cheating and desperation. It seems almost unavoidable that, if you feel forced to cheat to secure the best possible grade, you will ask your friends to disclose information about exams and essays. But in competition among philanthropic initiatives, we’ve seen greater results. Adam Smith may provide a framework for finding harmony among competing individuals, each “led by an invisible hand to promote an end which was no part of his intention,” but the harmful “two-sidedness” of competition between individuals in a biological environment becomes socially wasteful (as there is no biological “social contract” of the kind we find in economic theory). This Pareto-inefficient biological competition only serves to increase the difficulty for other individuals without adding value in and of itself.

In light of these ideas from different fields, is it possible for us to obtain an objective framework of competitiveness for education? Given the selfishness, destruction, hatred, jealousy, and violence that stem from competition, those like Gandhi might concede that competition is the aggressor in human behavior.

I believe we can embrace an ethical approach to a competitive education. Competition in education encourages social ties among peers who share the same goals. There may be downsides, such as anxiety and desperation that promote sly behavior out of self-interest, but if we use competition among students to promote constructive criticism, honest feedback, and the desire to improve oneself while avoiding the corrupt consequences of competition, we may be okay. An education system without competition would rely too heavily on an “external” objective framework that is difficult to create and inefficient at promoting the qualities a student needs. Competition among students can draw out the worst in us, but it is our responsibility to control our own behavior regardless of how the student next to us behaves.

But if my struggle to improve and get work done is about as intellectual as a sport between combatants, then I should just be happy I’m enjoying it.