Pondering difficult questions about her own cultural background, German author Nora Krug asks what belonging is and what it means to her. To belong to a culture of Germans responsible for the unspeakable atrocities of World War II meant that Krug had to challenge the very idea that she should belong to that culture. Though she was born decades after the fall of the Nazis, their actions would cast a shadow on her life. Searching for answers, Krug wrestles with home and her self in her graphic memoir.

For a German civilian, recognizing and understanding the actions of Nazi Germany would shake anyone to the bone. Like a scientist studying her own brain through fMRI or a philosopher accounting for his personal story with depression, Krug detaches herself from who she is while becoming intimately close to it. It’s this delicate balance between self-criticism and appreciation for what is valuable in her heritage that makes Krug’s story tricky and challenging. Fortunately, Krug approaches these issues and limitations by capturing the memories and stories of Nazi Germany with an empathetic brushstroke. By invoking symbolic images and a subdued art style, Krug invites the reader to join her in asking the intense questions that history raises. “Are Jews evil?” “What is my home?” “Where do I belong?” “Who am I?” These questions accompany stylized pictures of people who appear both fundamentally flawed in their thinking and terrifyingly real. I find myself shocked, yet soothed to know that my reactions and perceptions are okay to experience.

To manage and interpret these feelings of guilt and shame, mixed with the pride that any ordinary individual would hope to have for themselves, Krug uses interviews and anecdotes to account for the abhorrently evil actions that shaped the past. To be a German is to understand the notion of Heimat, the German word for the place that forms us. As humans, Germans have a responsibility to society and humanity in general, which means they must account for their decisions. For Germans, this means a humble, gentle remembrance of what mankind is capable of and determining what that means for the future. Other aspects of the memoir, such as the tender pacing between panels and scenes, allow the reader to become truly close to Krug’s thoughts. The shock and sorrow the reader experiences parallel the shared responsibility Germans have for recollecting and understanding the meaning of their past. Contrasting the realistic photographs with comical, nearly bizarre human faces, Krug almost invokes a dark sense of humor. This would be the kind of humor through which a nation may come to terms with its own dark history in order to fully move on and recover.

Does war ever leave a country? Or does it plague mankind forever? A German may worry that any sort of patriotism might be a reminiscent eulogy of the days of Nazi Germany. Despite the end of the war and the dismantling of Nazi Germany, the humans of today continue to struggle to understand their purpose and meaning in life. One might even argue that the journey of looking for meaning is much more important than the destination itself. As in the Myth of Sisyphus, we imagine ourselves content in grappling with questions of existence despite never having completely satisfying answers. Nora sets out to really find the truth about what her family did in what seems like a way to absolve her of her guilt. There’s no deus ex machina or dramatic catharsis of guilt and tragedy. Krug only wants answers. She wants to know what happened even if it doesn’t make her feel better. For her to put this paramount truth above all else gives her a much more objective and sublime look at her own past. I hope the reader can pick up the book and wonder what their past means for them.

Author: S. Hussain Ather

-

How war shapes a country: a review of Nora Krug’s "Belonging"

-

Memories of Claude Shannon, father of information theory

“My greatest concern was what to call it. I thought of calling it ‘information,’ but the word was overly used, so I decided to call it ‘uncertainty.’ When I discussed it with John von Neumann, he had a better idea. Von Neumann told me, ‘You should call it entropy, for two reasons. In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. In the second place, and more important, no one really knows what entropy really is, so in a debate you will always have the advantage.’” – Claude Shannon, Scientific American

When we take information for granted, we forsake the groundbreaking work of mathematician Claude Shannon. The data and functions of computers would not have been possible had Shannon not made great strides in the 1930s and 1940s in understanding the nature of information. With pivotal discoveries made at a young age, Shannon’s work at Bell Labs and MIT changed the way computers operate forever. His discoveries paved the way for how information is quantified, stored, managed, and used in any sort of operation on an entirely different level.

Before scientists could approach information using their own techniques, they needed to establish guidelines on what matter, energy, and measurements were. And, before Shannon took the stage, the scientists who paved the way for information theory borrowed ideas from chemistry and statistical mechanics. Mathematical physicist James Clerk Maxwell demonstrated methods of managing the disorder of gas molecules in the 1870s. He showed how to analyze the speeds of the molecules, categorize them by temperature, and even put forward thought experiments such as “Maxwell’s demon.” This research was followed up by Ludwig Boltzmann’s work in establishing the physics foundation of thermodynamics in the 1890s. Even later, in 1929, physicist Leo Szilard proposed a potential solution to Maxwell’s demon and developed the mathematical form for the amount of entropy produced by bit-wise measurements of gases. Because these systems were already described with words like “entropy” and “memory,” their potential for allowing computers to store and analyze large amounts of information was less a convenient metaphor and more a direct application of these scientific discoveries.

In the 1930s, the 21-year-old Shannon demonstrated binary circuits performing actions based on logic. With these logic circuits, computers could perform operations, be they simple or complex. His monumental piece “A Mathematical Theory of Communication,” published in 1948, would later be described as the “Magna Carta of the Information Age.” It changed the fundamental beliefs scientists had about information itself and allowed for far greater effectiveness in communicating information. Accuracy, precision, cost, and function greatly improved through the information a scientist could send and receive. The paper was the first to describe the “bit,” a unit of measurement of information carried through different media. A bit is a choice. On or off. Yes or no. One or zero. Shannon saw that these pairs are all the same.

On top of this, Shannon’s work embodied values long prized in the fields of mathematics and physics. His solutions were elegant, simple, and, by those factors, beautiful. Removing all that is unnecessary and superfluous and delivering the essential features of information theory, Shannon’s paper became a giant upon whose shoulders future researchers would stand. Soon, students interested in mechanical engineering and mathematics found scientists starting multi-million dollar companies and putting forward incredibly practical applications of their disciplines. Titans like Steve Jobs and Bill Gates would arise in the Information Age, and the general public’s access to computers, televisions, and phones would change the landscape of science and engineering forever. Shannon continued to emphasize that the scientific notion of information is void of meaning itself. Instead, chaotic systems and strings of random numbers, altogether meaningless, are dense with information.
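To make that last point concrete, here is a minimal sketch of my own (not code from Shannon’s paper) that estimates the entropy of a string, in bits per symbol, from its character frequencies. The helper name `entropy_bits` and the two sample strings are illustrative assumptions.

```python
import math
import random
from collections import Counter

def entropy_bits(symbols):
    """Empirical Shannon entropy of a sequence, in bits per symbol."""
    counts = Counter(symbols)
    total = len(symbols)
    # H = sum over symbols of p * log2(1 / p), with p the observed frequency.
    return sum((n / total) * math.log2(total / n) for n in counts.values())

random.seed(0)
repetitive = "A" * 1000                                          # fully predictable
coin_flips = "".join(random.choice("01") for _ in range(1000))   # random bits

print(entropy_bits(repetitive))   # a single repeated symbol carries no information
print(entropy_bits(coin_flips))   # close to one bit per fair coin flip
```

The random string means nothing to a human reader, yet it scores far higher than the orderly one, which is exactly the sense in which meaningless sequences are "dense with information."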

For the rest of his life, Shannon kept a lighthearted demeanor. Taking an interest in juggling, poetry, and unicycling, he turned down fame and, instead, chose to remain an earth-shattering researcher in the world of information sciences.

Finally, near the end of his life, the poet-mathematician wrote a letter to Scientific American:

Dear Dennis:

You probably think I have been fritterin’, I say fritterin’, away my time while my juggling paper is languishing on the shelf. This is only half true. I have come to two conclusions recently:

1) I am a better poet than scientist. 2) Scientific American should have a poetry column.

You may disagree with both of these, but I enclose “A Rubric on Rubik Cubics” for you.

Sincerely,

Claude E. Shannon

P.S. I am still working on the juggling paper.

-

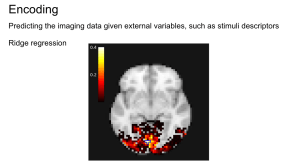

Encoding and decoding with stochastic neuron models

The imaginary component of a sample Fourier transform

In the spring of 2018, I worked on an encoding and decoding project for a course about machine learning in Python. I studied the ways neuroscientific data can be analyzed to form predictions of how humans perceive the world. For this set of data, we rely on voxels (the volumetric array elements of neuroimaging data) and can measure various characteristics from them such as intensity, size, and shape. For these sorts of problems, supervised learning can be used to decode brain images, relating them to behavioral or clinical observations. The predictions made by these supervised forms of learning can then be cross-validated to assess how the results from individual experiments generalize to broader patterns in neuroscience data. We’ll be using a Python module called nilearn for this analysis.

In this post, I’d like to explain how to apply different statistical methods to real data in the field of neuroscience. Biophysical models can be built to account for empirical evidence from biology and neuroscience using descriptions of neurons (complete with dendrites, synapses, and axons). These mathematical frameworks are common throughout various problems in genetics and embryology, but I’d like to focus on their role in neuron models. By modeling mechanisms such as sodium and potassium ion channels (which activate when the membrane reaches threshold potentials and produce spikes), we can uncover predictive models based on the causal structure of this data.

We can test the performance of these, and other, models by using them as predictive models of encoding. Given a stimulus, will the model be able to predict the neuronal response? Will it be able to predict the spike times (the moments at which a neuron fires an action potential) observed in real neurons when driven by the same stimulus, or only the mean firing rate or PSTH (peristimulus time histogram)? Will the model be able to account for the variability observed in neuronal data across repetitions?

A sequence, or “train”, of spikes may contain information based on different coding schemes. In motor neurons, for example, the strength at which an innervated muscle is contracted depends solely on the “firing rate”, the average number of spikes per unit time (a “rate code”). At the other end, a complex ‘temporal code’ is based on the precise timing of single spikes. They may be locked to an external stimulus such as in the visual and auditory system or be generated intrinsically by the neural circuitry.

Testing the performance of models addresses yet a bigger question. What information is discarded in the neural code? What features of the stimulus are most important? If we understand the neural code, will we be able to reconstruct the image that the eye is actually seeing at any given moment from spike trains observed in the brain? The problem of decoding neuronal activity is central both for our basic understanding of neural information processing and for engineering ‘neural prosthetic’ devices that can interact with the brain directly. Given a spike train observed in the brain, can we read out intentions, thoughts, or movement plans? Can we use the data to control a prosthetic device?

While neural codes are characterized in terms of these encoding views (i.e., how the neurons map the stimulus onto the features of spike responses), these are often investigated and validated using decoding. From the decoding viewpoint, rate coding is operationally defined by counting the number of spikes over a period of time, without taking into account any correlation structure among spikes. Any scheme based on such an operation is equivalent to decoding under the stationary Poisson assumption, because the number of spikes over a period of time, or the sample mean of interspike intervals (ISIs), is a sufficient statistic for the rate parameter of a homogeneous Poisson process.
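A small simulation can illustrate the rate-code readout described above. This is a sketch under my own assumptions: the function names `poisson_spike_train` and `decode_rate` are hypothetical, and the 20 Hz rate and 200 s duration are made-up values, not real recordings.

```python
import random

def poisson_spike_train(rate_hz, duration_s, rng):
    """Spike times of a homogeneous Poisson process, built from exponential ISIs."""
    spikes, t = [], 0.0
    while True:
        t += rng.expovariate(rate_hz)   # draw the next interspike interval
        if t >= duration_s:
            return spikes
        spikes.append(t)

def decode_rate(spikes, duration_s):
    """Rate-code readout: only the spike count is used, spike timing is ignored."""
    return len(spikes) / duration_s

rng = random.Random(1)
true_rate, duration = 20.0, 200.0       # illustrative values
spikes = poisson_spike_train(true_rate, duration, rng)
estimate = decode_rate(spikes, duration)
print(f"true rate {true_rate} Hz, decoded rate {estimate:.2f} Hz")
```

For a homogeneous Poisson process, the count divided by the duration is the maximum-likelihood estimate of the rate, so nothing is lost by throwing away the individual spike times in this particular setting.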

Since neuronal responses are stochastic, a one-to-one mapping of stimuli onto neural responses does not exist, causing a mismatch between the two viewpoints of neural coding. For a model to be stochastic, its output must be randomly determined rather than fixed by its input. Generalized linear models (GLMs), which have been used for the mathematical analysis of neuronal data, have been shown to correspond to models used specifically in neuroscience. This correspondence holds when the spiking history term contains only the last spike and a log-link function is used (e.g., soft-threshold integrate-and-fire models (Paninski et al., 2008)).
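As a rough sketch of what such a model can look like, the code below simulates a spiking GLM in discrete time bins with a log link and a history term that depends only on the time since the last spike. Everything here is an assumption for illustration: the function name `simulate_glm_neuron`, the exponential shape of the history term, and all parameter values. It is not the model from Paninski et al. (2008).

```python
import math
import random

def simulate_glm_neuron(stimulus, bias, gain, refractory_weight, tau, dt, rng):
    """Bernoulli-per-bin approximation of a Poisson GLM with a log link.

    log(rate) = bias + gain * stimulus[t] + history, where the history term
    depends only on the time elapsed since the most recent spike.
    """
    spikes, last_spike = [], None
    for t, s in enumerate(stimulus):
        history = 0.0
        if last_spike is not None:
            elapsed = (t - last_spike) * dt
            history = refractory_weight * math.exp(-elapsed / tau)
        rate = math.exp(bias + gain * s + history)   # log link
        p_spike = 1.0 - math.exp(-rate * dt)         # P(at least one spike in bin)
        fired = rng.random() < p_spike
        spikes.append(1 if fired else 0)
        if fired:
            last_spike = t
    return spikes

dt = 0.001                                           # 1 ms bins
stimulus = [math.sin(2 * math.pi * 5 * i * dt) for i in range(2000)]
params = dict(bias=math.log(20), gain=1.0, refractory_weight=-5.0, tau=0.005, dt=dt)

# Same stimulus, different random draws: the spike trains differ across trials.
trial_a = simulate_glm_neuron(stimulus, rng=random.Random(0), **params)
trial_b = simulate_glm_neuron(stimulus, rng=random.Random(1), **params)
print(sum(trial_a), sum(trial_b))
```

Running the same stimulus through the model twice gives different spike trains, which is the trial-to-trial variability that a deterministic one-to-one mapping cannot capture.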

Due to its complex and indirect acquisition process, neuroimaging data often have a low signal-to-noise ratio. They contain trends and artifacts that must be removed for machine learning algorithms to work as efficiently as possible. Signal cleaning includes detrending, normalization, and frequency filtering. Detrending removes a linear trend over the time series of each voxel. This is a useful step when studying fMRI data, as the voxel intensity itself has no meaning and we want to study its variation and correlation with other voxels. Detrending can be done with SciPy (scipy.signal.detrend).
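Here is a minimal sketch of detrending with `scipy.signal.detrend`. The data are synthetic (a made-up linear drift plus noise for five fake voxels), so the array sizes and drift values are assumptions rather than anything measured.

```python
import numpy as np
from scipy.signal import detrend

rng = np.random.default_rng(0)
n_timepoints, n_voxels = 200, 5              # made-up sizes for illustration
t = np.arange(n_timepoints)

# Synthetic voxel signals: a slow linear drift plus noise, one column per voxel.
drift = np.outer(t, rng.uniform(0.01, 0.05, size=n_voxels))
signals = 100.0 + drift + rng.normal(scale=1.0, size=(n_timepoints, n_voxels))

# axis=0 removes a least-squares line along time, separately for each voxel.
cleaned = detrend(signals, axis=0, type="linear")

# Fitted slopes before and after cleaning, one per voxel.
slopes_before = np.polyfit(t, signals, 1)[0]
slopes_after = np.polyfit(t, cleaned, 1)[0]
print(slopes_before.round(3), slopes_after.round(3))
```

Because the fitted line includes an intercept, the cleaned series also end up centered on zero, which fits the point that the absolute voxel intensity carries no meaning.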

Normalization consists of setting the time series variance to 1. This harmonization is necessary as some machine learning algorithms are sensitive to different value ranges. Frequency filtering consists of removing high- or low-frequency signals. Low-frequency signals in fMRI data are caused by physiological mechanisms or scanner drifts. Filtering can be done with a Fourier transform (scipy.fftpack.fft) or a Butterworth filter (scipy.signal.butter).
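The sketch below combines a Butterworth high-pass filter with normalization on a synthetic time series. The repetition time of 2 s, the 0.01 Hz cutoff, the filter order, and the two sine components are all illustrative assumptions, not values from the project.

```python
import numpy as np
from scipy.signal import butter, filtfilt

tr = 2.0                        # assumed repetition time in seconds
fs = 1.0 / tr                   # sampling frequency in Hz
t = np.arange(300) * tr

# Synthetic voxel time series: a slow drift-like component plus a faster one.
slow = 5.0 * np.sin(2 * np.pi * 0.002 * t)
fast = 1.0 * np.sin(2 * np.pi * 0.05 * t)
signal = slow + fast

# 4th-order Butterworth high-pass with a 0.01 Hz cutoff (illustrative choice).
b, a = butter(4, 0.01, btype="highpass", fs=fs)
filtered = filtfilt(b, a, signal)            # forward-backward, zero-phase filtering

# Normalization: zero mean and unit variance along time.
normalized = (filtered - filtered.mean()) / filtered.std()
print(normalized.mean().round(6), normalized.var().round(6))
```

I used `filtfilt` rather than a single forward pass so that the filter does not shift the signal in time, which matters when the series is later aligned with stimulus onsets.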

In this project, I reconstructed visual images by combining local image bases of multiple scales, whose contrasts were independently decoded from fMRI activity by automatically selecting relevant voxels and exploiting their correlated patterns. Binary-contrast, 10 × 10-patch images (2^100 possible states) were accurately reconstructed without any image prior on a single trial or volume basis by measuring brain activity only for several hundred random images. Reconstruction was also used to identify the presented image among millions of candidates. The results suggest that our approach provides an effective means to read out complex perceptual states from brain activity while discovering information representation in multivoxel patterns.

Neuroimaging data often come as NIfTI files: 4-dimensional data (3D scans with a time series at each location, or voxel) along with a transformation matrix (called the affine) used to map voxel locations from array indices to world coordinates. The tools we use convert these 4-dimensional images into 2-dimensional arrays.

Masking

Neuroimaging data are represented in 4 dimensions: 3 spatial dimensions and one dimension to index time or trials. Scikit-learn algorithms, on the other hand, only accept 2-dimensional samples × features matrices. Depending on the setting, voxels and time series can be considered as features or samples. For example, in spatial independent component analysis (ICA), voxels are samples. The reduction from 4D images to feature vectors comes with the loss of spatial structure. It does, however, make it possible to discard uninformative voxels, such as the ones outside of the brain. Such voxels, which carry only noise and scanner artifacts, would reduce SNR and affect the quality of the estimation. The selected voxels form a brain mask. Such a mask is often provided along with the dataset or can be computed with software tools such as FSL or SPM.
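The masking step can be sketched with plain NumPy. The volume and the mask below are made up for illustration. In practice nilearn provides a `NiftiMasker` class that does this on real NIfTI images, and this sketch only mimics the idea.

```python
import numpy as np

rng = np.random.default_rng(0)
# Made-up 4D volume: 4 x 4 x 3 voxels, 10 time points.
data_4d = rng.normal(size=(4, 4, 3, 10))

# Hypothetical brain mask: True for the voxels we keep.
mask = np.zeros((4, 4, 3), dtype=bool)
mask[1:3, 1:3, :] = True

# Boolean indexing over the three spatial axes gives (n_voxels, n_timepoints);
# transposing yields the samples x features layout scikit-learn expects.
X = data_4d[mask].T
print(X.shape)   # (10, 12)

# Unmasking: put one feature vector back into 3D space, e.g. for plotting.
volume = np.zeros(mask.shape)
volume[mask] = X[0]
```

Here time points are the samples and voxels are the features. For a setting like spatial ICA, where voxels are the samples, you would simply skip the transpose.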

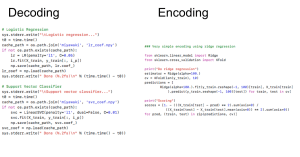

In the experiment of Miyawaki et al. (2008), several series of 10×10 binary images are presented to two subjects while activity in the visual cortex is recorded. The visual reconstruction steps are shown to the left. In the original paper, the training set is composed of random images (where black and white pixels are balanced) while the testing set is composed of structured images containing geometric shapes (square, cross…) and letters. Here, for the sake of simplicity, we consider only the training set and use cross-validation to obtain scores on unseen data. In the following example, we study the relation between stimulus pixels and brain voxels in both directions: the reconstruction of the visual stimuli from fMRI, which is a decoding task, and the prediction of fMRI data from descriptors of the visual stimuli, which is an encoding task.
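To show the shape of the two tasks, here is a hedged sketch with scikit-learn on synthetic data. It does not use the Miyawaki dataset, and the estimators (`LogisticRegression` for decoding, `Ridge` for encoding), the linear pixel-to-voxel mapping, and all sizes are my own assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression, Ridge
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
n_trials, n_pixels, n_voxels = 600, 100, 200   # 10 x 10 images; sizes are made up

# Random binary stimuli and a hypothetical linear pixel-to-voxel mapping plus noise.
stimuli = rng.integers(0, 2, size=(n_trials, n_pixels))
weights = rng.normal(size=(n_pixels, n_voxels))
fmri = stimuli @ weights + rng.normal(scale=0.5, size=(n_trials, n_voxels))

# Decoding: predict one pixel's on/off state from all voxels.
pixel = 0
decoder = LogisticRegression(max_iter=2000)
decoding_accuracy = cross_val_score(decoder, fmri, stimuli[:, pixel], cv=5).mean()

# Encoding: predict one voxel's activity from all pixels.
voxel = 0
encoder = Ridge(alpha=1.0)
encoding_r2 = cross_val_score(
    encoder, stimuli, fmri[:, voxel], cv=5, scoring="r2"
).mean()

print(f"decoding accuracy: {decoding_accuracy:.2f}, encoding R^2: {encoding_r2:.2f}")
```

Looping `pixel` over all 100 positions gives a per-pixel accuracy map, and looping `voxel` gives a per-voxel score map, which is how the two directions can be compared side by side.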

For encoding, reconstruction accuracy depends on pixel position in the stimulus. Note that the pixels and voxels highlighted are the same in both the decoding and encoding figures, and that encoding and decoding roughly match, as both approaches highlight a relationship between the same pixel and voxels. Both the decoding and encoding analyses use scikit-learn.

Sources

Abraham et al. (2014). “Machine learning for neuroimaging with scikit-learn” Frontiers in Neuroinformatics.

Miyawaki et al. (2008). “Visual Image Reconstruction from Human Brain Activity using a Combination of Multiscale Local Image Decoders” Neuron.

Paninski, L., Brown, E. N., Iyengar, S., & Kass, R. E. (2008). Statistical analysis of neuronal data via integrate-and-fire models. Chapter in Stochastic Methods in Neuroscience, eds. Laing, C. & Lord, G. Oxford: Oxford University Press.

"What’s so great about classical music?" A personal and philosophical perspective

“The aim and final end of all music should be none other than the glory of God and the refreshment of the soul.” – Bach

Arguably more so than other forms of music, classical music can enrich and stir the soul in ways that require incredible lengths of comprehension and description to truly appreciate. To put in words what the body and mind experience when listening to a classical composition would be to draw lines between what is and what isn’t music in these complex theoretical forms that we give life to. Looking at definitions of aesthetics, pleasure, symmetry, and other elements that go into all forms of music, we can create an idea of what classical music is and why it’s so important to many of us. I write this as I listen to Ravel’s “Pavane for a Dead Princess”, a piece I had the honor of playing in my high school orchestra class. I see various YouTube videos promising that their playlists of classical music are beneficial to brain power. I’m incredibly dubious of those claims, but I believe we can find a sort of intellectual enlightenment through a philosophical appreciation of, or reflection on, the music itself.

In making sense of these auditory forms of art, we need clear guidelines such that we can interpret musical sounds as representations of objects only where it is appropriate and justified to do so. Our human ability to interpret and assign meaning to music and what that means for humans and other forms of art must be investigated to understand the way our aesthetic ability works. It appears that music has the capacity to engage our aesthetic sensibility without also engaging the cognition of objects. This sensibility is linked in complex ways to inner experience, feelings, moods, and emotions.

Generally in the Western tradition, we can turn to Plato for a very early account of what the philosophy of music truly is and what it entails. But music and its role in society existed long before Plato, and, to dig down to the earliest discussions of music, we’d need to understand the nature of that music itself. Still, Plato’s account of music provides a relatively suitable starting point given all the information in his works the Republic and Laws.

The discussion of the special power of music to shape our inner life predates Plato, as evidenced by the lively debates of the pre-Socratics on this topic. Plato believed that music is an art that can bypass reason and penetrate into our innermost self, impacting the constitution of our character. Music functions in a way like a “charm” on our inner life, shaping this life to its pattern. Classical music in particular stands out among musical cultures for its ability to evoke compelling inner experiences in the listener. Plato believed music had this direct effect on the soul. One might argue that the power of classical works to evoke such experiences appears to be heightened in many purely instrumental works despite the fact that such pieces possess no readily identifiable meaning. It’s as though compositions that don’t require immediate understanding but, rather, rely on deeper, more nuanced forms of understanding meaning can evoke more authentic, enriched experiences.

Though I was an aficionado of classical music in high school, I turned my back on it when I entered college. I mostly wanted to forget and rid myself of the many negative and unwanted memories I had associated with the music in high school. Feeling as though it was part of a past that I was desperately trying to move on from, I even distanced myself from the high school friends with whom I had enjoyed classical music. Instead, I turned to rock, hip hop, and pop music in college, and found them just as fulfilling as I had found classical. And, though I studied physics and philosophy in tandem and searched for meaning and beauty in any discipline I could come across during my undergraduate years, I found little reason to listen to classical music.

But that has changed.

Over the past three weeks, I’ve started listening to classical music around 1-2 hours a day. I’ve re-connected with old friends from high school with whom I shared these musical experiences, and voiced my thoughts and memories to them. It was an incredibly difficult task for me to take on at first, as I found myself washed away by intrusive thoughts and memories from my high school years. All of the effort and pain I had put into trying to understand the meaning of Saint-Saëns’s piano concertos or Ravel’s “Bolero” came flooding back. I was immediately overwhelmed and felt forced to ease the experience with frequent walks and tons of coffee. As time went on, though, the intensity lessened. I became more familiar with the thoughts and emotions that took my mind hostage and have now reached a point at which listening to classical music has become a routine part of everything I do.

As I listen to more pieces by composers such as Shostakovich and Beethoven, I find myself more at ease with the way I perceive the world. With a refined sense of musical feeling and thought, I’ve slowly begun to lose interest in contemporary music (namely, rock, hip hop, and pop), and found myself more and more interested in the work of Wagner and Mozart. The sort of aesthetic inquiry that I could take with this music, though, required more research on my part. I wanted to understand what it meant to truly appreciate these forms of art no matter how archaic or esoteric they may seem. I’ve re-kindled the sentiments that I carried with me as a teenager playing in my high school orchestra and now seek deeper meaning behind this experience.

According to the Internet Encyclopedia of Philosophy, the three features of classical music which shape its aesthetic inquiry are music and the inner experience, the temporal aspect of classical music, and classical music as historical tradition.

“Paris Street” by Édouard Cortès

As I listened to the music, I thought about what sort of inner experience I was undergoing. Classical music engages the inner experience, and we need to understand how and why this connection is so strong. This meant thinking about what exactly in my subjective experience is caused by the classical music and how I can put those things into words and thoughts. Relying on only our auditory senses causes music to differ from other forms of aesthetic appreciation. To venture into these experiences inside of our own selves requires defined meanings and values about what music is. For me, classical music represents a message no matter who the composer is. As a listener, I had to understand what that message was, whether it was the formation of different harmonies coming together or notes that produced a pleasurable chord. For a representation of something using music to be an object, we rely on some sort of ability within ourselves to interpret and assign meaning to these auditory perceptions. Even in the absence of what we might call musical objects, we find thoughts, sentiments, and perhaps even arguments put forward by music. With classical music, this inner experience is very pronounced as it allows us to appreciate the art form for what it truly is.

We can also understand deeper features of classical music, such as how it’s temporal in the way it’s guided by time itself. It’s almost as though we move along with the music or that music is holding our hand as we listen. This puts music as something greater than simple idle thinking. It’s more akin to poetry or spoken word, but with a greater emphasis on rhythm, pitch, and other musical features. We listen to it just as the composer intended us to listen to it (though there are arguments that the performance is more pivotal than the composition). The relationship between the movement of music and physical motion creates an experience in which we can put forward different interpretations of classical music itself. I can then evaluate my subjective experiences of classical music against these arguments and the meaning I draw from this relationship. One might even use a metaphor that music is the ocean and winds upon which we guide our ships. This response of our minds to music can be automatic, as well, in the keenest, most primal perceptions that we have about music.

We can put these guidelines and rules of classical music in context. These rules came to define the Common Practice era and much of the backbone of all of Western music. Through the ability of the composer, listeners can determine what sort of creative effort went into producing these pieces just as we would comment on any other form of art. This forms the basis of our aesthetic attitude toward music and allows us to raise questions such as what sort of appreciation might be possible, where the aesthetic value comes from, how formal the value needs to be, and what sort of relationship we have with the aesthetic value. We determine these questions and ponder their solutions as we listen and even as we reflect on the way we listen. For it’s even arguable whether our experience of listening constitutes the experience of art itself. And these questions of music are so fundamental that they should reflect all of music.

As composers followed the historical tradition of the Common Practice era, they found themselves united by their feelings towards their music. However intimate they chose to be, they could put those emotions into their music. These compositions would be performed at ceremonies or other rituals. Perhaps it is my tendency as a lover of philosophy to look for absolute, universal truths in music. It’s difficult, though, as I have yet to fully understand whatever harmony united people in a shared language. As I listen, though, I wonder what sort of shared feeling an audience may have when the piece is performed at a concert hall. As it was written to be played alongside everything else in society, whether it was a religious observance or a holiday, the music carries the meaning of its societal role as well.

As I researched the history, I discovered how the tones and harmonies in the music would shape the culture of each time period, including the art and science of Medieval and Renaissance thinkers like Niccolò Machiavelli and Francis Bacon. In this way, music is truly just as important as other forms of art. I came across the paintings of Édouard Léon Cortès, a French post-impressionist artist who lived from 1882 to 1969, during Schoenberg’s time. My curiosity led me to explore what sort of musical background would have set the stage for Cortès’ creativity. Through my reading in the philosophy of classical music, I’ve come to know how this conception of classical music became shared across Europe, allowing artists, scientists, and writers to share the culture of different countries. Using these similar tools (like scales, rhythms, harmonies, etc.), composers could extract meaning. I’ve come to hypothesize that the diatonic scale (the set of notes used in virtually all of Western music) and other salient features of music are part of our own nature. They were neither artificially nor arbitrarily constructed. I briefly perused the writing of composers like Wagner and philosophers like Theodor Adorno (including the latter’s belief that we can locate truth-content in the art object, rather than in our own subjective perceptions) and have started to think that our relation with music is much deeper than culture alone would dictate. As time went on, the composers of the Common Practice period began using innovative techniques and expanding their uses of the tonal system. For this reason, I think the philosophical truths and ideals we draw from this period should take into account the potential of music and not limit themselves to only the musical pieces themselves. The philosophers of classical music aesthetics should also focus on non-tonal classical music.

From these analyses we can draw definitions of music. Deciding what is music and what is non-music could ensure that the diversity and quality of music allow the composer to control their art and message with greater precision. However, the definition itself isn’t so important for philosophers of classical music aesthetics as it would include the music composed after the classical era. On top of this definition, we

“Lankaart” by Édouard Cortès.

I use the comparison between the artwork of Édouard Cortès and classical music to draw attention to the aesthetic value of the latter. The painter created his works at the height of creativity in France, and, as such, the composers of the time like the expressionist Schoenberg could have influenced his work. As I contemplate the work of Cortès while studying the aesthetics of classical music, I wonder about the atmospheric qualities of the Paris weather that the artist captures. I also think about how our eyes perceive weather and the similarities between the sparks of paint and the glistening of sunshine and raindrops. In some of his evening compositions, the dark sky falls with delicate care and imagination, as though it were part of our interaction with nature itself. I want to conclude that these aspects of man’s interaction with nature fit perfectly with the aesthetic values of classical music that portray fundamental, natural perceptions of man. Similarly, with a musical ontology, we raise metaphysical questions about music. Is there a “painting” we can observe that represents pieces of music? How are those values and meanings expressed through music? It seems as though someone following the teachings of Plato would believe that classical music is an abstract object. I could evaluate the painter’s works through this meaning of music.

More questions about classical music could be raised, such as how much information is necessary to determine that a piece of music is a performance. Does one have to perform exactly the same piece that was written by the composer, or can someone’s instance of performing have its own level of difference? I believe we can understand how the fundamental rules and guidelines of music are followed to determine our notion of music and how it changes over time. Then, of course, the audience listens to music after a performer plays it. This audience can get a glimpse of the authenticity, but not the exact same authenticity that a composer originally intended. All of us view history through our own eyes and are limited by all these factors that affect music itself.

And finally, one of my personal favorites by Mikhail Glinka.

The information in this blogpost is largely taken from the Internet Encyclopedia of Philosophy.

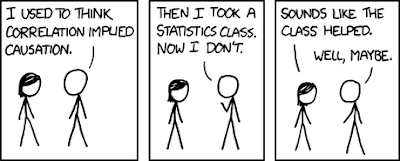

On the nature of causation and correlation: elections and cancer

Credit to Randall Munroe.

With election season approaching, everyone wants to know how the future of the United States’ leadership will shape up. As we turn to data, we can make predictions through inferences from the past and present, as statisticians such as Nate Silver would explain. As the title suggests, in this post I discuss under what conditions, exactly, we can use experimental data to deduce a causal relationship between two or more variables.

Scientists create randomized controlled experiments through which they can infer causal relations among different phenomena, variables, and other observations and ideas. Something like understanding how an object (in the absence of all forms of light) emits blackbody radiation requires understanding how an object emits light in the first place. And, from that, a scientist may be able to infer that the object’s own state in the absence of light causes it to emit radiation in this way. Still, randomized controlled experiments have their own limits and caveats no matter what experiment is being performed. This leaves scientists with questions of how to infer other types of explanations and what sort of causal relationships we can truly establish for a system.

Israeli-American computer scientist and philosopher Judea Pearl laid out much of the research related to theories of causality. Causal inference itself is a theory that is still debated among scientists and philosophers, and the premises, arguments, and conclusions that the theory provides can give us an understanding of correlation that doesn’t fall to errors in reasoning.

Pearl’s causal calculus is a set of three simple, powerful algebraic rules which can be used to make inferences about causal relationships. In particular, I’ll talk about the ways causal inference is possible, but I’ll also go into detail about the limits of these methods.

In explaining causes, consider the relationship between smoking and lung cancer. Several decades ago, the U.S. Surgeon General published a report that put forward the claim that cigarette smoking causes lung cancer. But the report came under attack not just from tobacco companies, but also from some of the world’s most prominent statisticians, including the great Ronald Fisher. There could have been other factors at play in this complicated relationship between smoking and lung cancer, such as genetics, environmental factors, and even more personal characteristics such as age or race. And, in actuality, one would have to understand the relationship between lung cancer and an individual’s decision to smoke, not the simple correlation of smoking and lung cancer itself. The actual relationship and the way these factors are correlated with one another was most likely much more nuanced than just claiming cigarette smoking causes cancer. Understanding the details and specifics of these relationships would be necessary for individuals to make healthy and beneficial decisions in their lives.

A randomized controlled experiment, as briefly discussed earlier, may be possible, but the way it should be done requires explanation. A scientist may choose to perform an experiment in which they would force a person to smoke or not and, through an appropriately sized sample of smokers and non-smokers, they could observe the cancer rates among those two groups. The scientist would have to keep all other factors equal and ensure that the groups are truly random and large enough to support the generalization that smoking causes lung cancer. The scientist could then determine whether there is a causal relationship.

Yet, in reality, things don’t occur so simply. Experiments such as these are time-consuming, difficult to maintain, and rely on controlling for many factors that complicate the issue observed. This doesn’t even account for ethical or legal issues in performing such an experiment. This raises a fundamental, significant issue for scientists seeking to explore the relationships among activities like smoking and its associated health consequences.

The causal models we construct to analyze the smoking-cancer connection allow us to create diagrams that indicate there’s a hidden factor at play with both smoking and lung cancer. Mathematically speaking, the arrows denote how one factor causes another. Since we don’t know exactly how the hidden factor behaves or what it is, we illustrate it as a single unlabeled node with arrows pointing to both smoking and lung cancer, which keeps the diagram simple and clean.

There is also a third possibility: that the combination of both smoking and a hidden factor contributes to lung cancer. This makes our correlations and relationships even more complicated, but allows for more nuanced and detailed justifications of these relationships. We could perhaps develop the argument that smoking inherently may reduce the probability of lung cancer while some hidden factor increases the likelihood of cancer in such a way that we still observe an increase in the risk of cancer. These possibilities and explanations may seem to hinge on unnecessarily complicated premises and observations, but, given how many factors are at play in the empirical evidence on the issue of smoking and cancer, they provide us with much more potential for creating accurate arguments about the issue.

For the notion of causality to make sense we need to constrain the class of graphs that can be used in a causal model. We don’t want any loops to occur in the graph. If there were loops, we wouldn’t be able to discern an appropriate causal relationship, as any particular node in the loop would “cause” itself in this graph: the explanation for that node would depend on the node itself. In investigating causal relationships, we create models that we continue to change and update with more and more nodes and relationships added to the graph. We generally want to avoid methods that introduce some random indirect variable affecting every vertex of the graph. We want to have as much certainty as possible when generating the graphs.
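The no-loops requirement can be checked mechanically. Below is a minimal sketch, assuming the causal graph is stored as a dictionary mapping each node to the nodes it directly causes; the function name `is_acyclic` and the node names are illustrative choices, not part of any particular causal-inference library. It uses Kahn's algorithm: repeatedly remove nodes that nothing points to, and if some nodes can never be removed, they sit on a loop.

```python
from collections import deque


def is_acyclic(edges):
    """Return True if the directed graph {cause: [effects]} contains no loop."""
    # Collect every node, including ones that only appear as effects.
    nodes = set(edges)
    for effects in edges.values():
        nodes.update(effects)

    # Count how many arrows point into each node.
    indegree = {node: 0 for node in nodes}
    for effects in edges.values():
        for effect in effects:
            indegree[effect] += 1

    # Start from nodes with no incoming arrows (no modeled cause).
    queue = deque(node for node in nodes if indegree[node] == 0)
    removed = 0
    while queue:
        node = queue.popleft()
        removed += 1
        for effect in edges.get(node, []):
            indegree[effect] -= 1
            if indegree[effect] == 0:
                queue.append(effect)

    # Nodes on a loop never reach indegree 0, so they are never removed.
    return removed == len(nodes)


# Hypothetical graph: a hidden factor influences both smoking and cancer.
valid_model = {"hidden": ["smoking", "cancer"], "smoking": ["cancer"]}
# Adding an arrow from cancer back to smoking creates a loop.
looped_model = {"hidden": ["smoking", "cancer"], "smoking": ["cancer"], "cancer": ["smoking"]}

print(is_acyclic(valid_model))
print(is_acyclic(looped_model))
```

The first graph passes the check and the second does not, since smoking and cancer would each end up "causing" themselves.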

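Causal models can generally be described using their conditional probabilities, such as with the equation

$$p(x_1, x_2, \ldots, x_n) = \prod_{j=1}^{n} p\big(x_j \mid \mathrm{pa}(x_j)\big).$$

In this equation, we’re describing the joint probability (using p) of all the variables as a product with one term per variable: the probability of that variable conditioned on the events that directly cause it, written here as pa(x_j) for the parents of x_j in the graph.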
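To make the product-of-conditional-probabilities idea concrete, here is a minimal sketch for the three-node smoking graph (a hidden factor, smoking, and cancer). Every probability in the tables is invented for illustration, and the names `PARENTS`, `P_TRUE`, and `joint` are hypothetical. Each variable contributes one factor: its probability given the values of the nodes that point to it.

```python
from itertools import product

# Which nodes directly cause each node (hypothetical graph).
PARENTS = {
    "hidden": (),
    "smoking": ("hidden",),
    "cancer": ("hidden", "smoking"),
}

# P(node is True | values of its parents). All numbers are made up.
P_TRUE = {
    "hidden": {(): 0.3},
    "smoking": {(True,): 0.6, (False,): 0.2},
    "cancer": {
        (True, True): 0.5,
        (True, False): 0.3,
        (False, True): 0.2,
        (False, False): 0.05,
    },
}


def conditional(node, value, assignment):
    """Probability that `node` takes `value`, given its parents' values."""
    parent_values = tuple(assignment[parent] for parent in PARENTS[node])
    p_true = P_TRUE[node][parent_values]
    return p_true if value else 1.0 - p_true


def joint(assignment):
    """Joint probability of a full assignment: one factor per node."""
    result = 1.0
    for node, value in assignment.items():
        result *= conditional(node, value, assignment)
    return result


everyone_true = {"hidden": True, "smoking": True, "cancer": True}
print(joint(everyone_true))  # 0.3 * 0.6 * 0.5

# Sanity check: the joint probabilities of all 8 assignments sum to 1.
total = sum(
    joint(dict(zip(PARENTS, values)))
    for values in product([True, False], repeat=len(PARENTS))
)
print(total)
```

Because each table row is a valid probability, the eight joint probabilities add up to one (up to floating-point rounding), which is a quick way to catch a mistyped table.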

Simpson’s paradox illustrates the issues that arise when we group individuals by various factors and the trends we observe when we choose to do so. A clear example of Simpson’s paradox can be observed in a study of gender bias in graduate school admissions to the University of California, Berkeley. The fall 1973 data showed that men applying were more likely than women to be admitted. Of the 8,442 men who applied, 44% were admitted while, of the 4,321 women who applied, 35% were admitted. However, when individual departments were examined, the admissions results of six departments were significantly biased against men, while those of four were significantly biased against women. In fact, the data pooled and corrected for department showed a “small but statistically significant bias in favor of women.”

The paper went on to argue that women tended to apply to departments with low rates of admission among all or most applicants (such as English), while men tended to apply to departments with high rates of admission (such as engineering and chemistry).

From a purely causal point of view (in understanding which factors provide the means for determining other factors) this result seems paradoxical. Making clear, educated statements on whether an individual is likely to be accepted to Berkeley may hinge more on the assumptions and premises that lead up to our conclusions than on easy-to-digest, generalizable conclusions. Two variables which appear correlated can become anti-correlated when another factor is taken into account.
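The reversal is easier to believe with numbers in hand. The sketch below uses invented applicant counts for two hypothetical departments (these are not the real Berkeley figures) in which women are admitted at a higher rate within each department, yet at a lower rate overall, because most women apply to the department that rejects most applicants.

```python
from fractions import Fraction

# Hypothetical (applicants, admitted) counts; NOT the 1973 Berkeley data.
ADMISSIONS = {
    "easy_department": {"men": (800, 480), "women": (100, 70)},
    "hard_department": {"men": (200, 40), "women": (900, 225)},
}


def department_rates(data):
    """Admission rate per department and group."""
    return {
        department: {
            group: Fraction(admitted, applied)
            for group, (applied, admitted) in groups.items()
        }
        for department, groups in data.items()
    }


def overall_rates(data):
    """Admission rate per group after pooling all departments."""
    totals = {}
    for groups in data.values():
        for group, (applied, admitted) in groups.items():
            prev_applied, prev_admitted = totals.get(group, (0, 0))
            totals[group] = (prev_applied + applied, prev_admitted + admitted)
    return {
        group: Fraction(admitted, applied)
        for group, (applied, admitted) in totals.items()
    }


by_department = department_rates(ADMISSIONS)
pooled = overall_rates(ADMISSIONS)

for department, rates in by_department.items():
    print(department, {group: float(rate) for group, rate in rates.items()})
print("pooled", {group: float(rate) for group, rate in pooled.items()})
```

In this toy data the department a person applies to plays the role of the extra factor: once it is taken into account, the apparent advantage for men in the pooled rates turns into an advantage for women in both departments.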

By any means, causality itself seems to fly under the radar among too many scientists. To ascertain the truth and validity of arguments of causality and use them in any sort of discipline, one must come to understand the nature of causality itself. In creating arguments and recognizing the limitations of these methods of inquiry, we can create more refined understandings of the universe and allow more certainty in our predictions and inferences.

There are ways to resolve Simpson’s paradox, though. With a causal Bayesian network (an acyclic graph like the ones we’ve been working with; let’s say X causes Y), we can measure how changing X would change Y and determine the relationship between them. As with our smoking example, there may be ethical and logistical reasons why this is not possible. One could also show that an extra variable correlates with both X and Y. That is, we could determine that X causes Z, which in turn causes Y. Finally, one might have an indirect variable which affects both X and Y. Such a relationship would look like Z causes X and Z causes Y. As explained using the graphs illustrating smoking and lung cancer, we generally want our measurements to account for these hidden variables to determine how a causal model works.
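The last case, where Z causes X and Z causes Y, is worth simulating, because it produces a strong correlation between X and Y even though neither causes the other. The sketch below is a toy model under stated assumptions: all three variables are binary, X and Y each copy Z 90% of the time, and the function names are made up for illustration. Setting X by an independent coin flip stands in for a randomized experiment.

```python
import random


def noisy_copy(bit, rng, fidelity=0.9):
    """Return `bit` with probability `fidelity`, otherwise its opposite."""
    return bit if rng.random() < fidelity else 1 - bit


def simulate(n, rng, intervene=False):
    """Draw n (x, y) pairs from the model Z -> X and Z -> Y.

    With intervene=True, X is set by a coin flip that ignores Z,
    which mimics assigning X at random in an experiment.
    """
    samples = []
    for _ in range(n):
        z = rng.randint(0, 1)
        x = rng.randint(0, 1) if intervene else noisy_copy(z, rng)
        y = noisy_copy(z, rng)
        samples.append((x, y))
    return samples


def risk_difference(samples):
    """Estimate P(Y=1 | X=1) - P(Y=1 | X=0) from the samples."""
    y_when_x1 = [y for x, y in samples if x == 1]
    y_when_x0 = [y for x, y in samples if x == 0]
    return sum(y_when_x1) / len(y_when_x1) - sum(y_when_x0) / len(y_when_x0)


rng = random.Random(0)
observed = risk_difference(simulate(20000, rng))
randomized = risk_difference(simulate(20000, rng, intervene=True))

print(f"observational difference: {observed:.2f}")
print(f"randomized difference:    {randomized:.2f}")
```

Under these assumptions the observational difference should land near 0.82 - 0.18 = 0.64, while the randomized difference should hover around zero, since breaking the arrow from Z to X removes the only path linking X and Y.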

"What is art?" Turning to philosophy for answers

Livia’s Garden.

This post introduces the problem of defining what art is. I’ll introduce different theories of art and consider their respective merits and pitfalls. To start, we will need to have a clear idea of what we hope to achieve with a definition of art and what sort of thing that definition would need to be.

An important distinction to make is the one between nominal and real (or essential) definitions. A nominal definition defines the idea that a word stands for, while a real definition defines what it is to be what that word refers to. A real definition for X would identify a property (or set of properties) that each and every X has and that only Xs have. For example, a real definition for blue would be light waves with wavelengths in the 450–495 nanometer range, while a nominal definition may state that blue is the color associated with the sky and the sea. To be the color blue is to be (to reflect) light in the 450–495 nm range, not to be the color of the sky or sea (which are not even always blue). Now when we move our considerations from color to art, the real definition of art seems to be our true goal. Other definitions of art, like in its use as praise (“Wow, your painting of those flowers is a work of art!”) or derision (“Wow, your painting of those flowers is a work of art!”), fail to provide both sufficient and necessary properties for an artwork. That being said, there is only so much a definition can do. We should not, for example, expect a definition to explain why art matters or why we create it.

There have been many attempts to provide a theory of art, going as far back as Plato and continuing into the current era. Some early definitions of art include:

- Art as imitation or representation.
- Art as a medium for emotional expression.
- Art as ‘significant form’.

These definitions all have an immediate draw, but upon closer look one can see that these views are lacking. By these accounts many non-art objects would count as art (like a nicely made advertisement or a sports car), and possibly some artworks, like Duchamp’s Fountain, Warhol’s Brillo Boxes, and other conceptual pieces, would not count as art.

Can art be defined?

With the difficulties faced in defining art with an appropriate scope, one has to question the possibility of defining art at all. In his The Role of Theory in Aesthetics, Morris Weitz argued that any real definition of art would fail because works of art are related to one another like a family rather than by some rigid set of properties. This family resemblance relationship (taken from Wittgenstein) proposes that groups given a common name and thought to be connected by a common, essential feature are, rather, connected by a network of overlapping and criss-crossing features, much like a family whose shared characteristics (build, height, eye color, facial features) overlap and criss-cross throughout the family tree.

Let’s consider Wittgenstein’s example, games, which exhibit this familial resemblance to one another. There are many kinds of games (ball games, card games, board games, and so on) that fail to be united by a ubiquitous trait. When asked to define what makes something a game, one might say, “It is a competition with winners and losers.” While this may work for games like chess, it doesn’t seem to work for games like catch or games with a single participant. Another may offer skill as a definition, but we can counter with games like rock-paper-scissors or Russian roulette. For any uniting feature offered, there will be a game that lacks this feature (or a non-game that has this feature). To know what a game is is not to have a real definition of it, but to be able to take new examples and determine whether they are games or not.

Art shares this quality. Weitz proposes an open definition of art where, upon experiencing a new art-candidate, one has to make a decision whether or not it counts as art based on its similarities to past artworks. In doing so the number of properties that one associates with art grows, which accounts for the expansion of art from the fine arts to the multitude of art forms accepted today. He concludes that while theories of art fail to provide a real definition of art, they retain value as suggestions to reconsider what we consider in deciding whether something is art, and can be seen as reactionary pieces to the times.

Weitz provides quite a compelling challenge to any theory of art, which, combined with the challenge of placing artworks like Duchamp’s Fountain and Warhol’s Brillo Boxes, led aestheticians to definitions of art of two major kinds: functional (being defined by what it does or is intended to do) and relational (being defined by its standing to other things). These approaches hope to avoid the issues of past definitions by focusing on non-perceptual properties of art rather than something perceptual like form.

New Theories of Art

Most functional definitions of art treat aesthetic properties as being central to art’s function. A popular functionalist theory of art is Monroe Beardsley’s intentional account; he defines art as an arrangement intended to be capable of giving an aesthetic experience made valuable by its aesthetic qualities (or an arrangement that belongs to a class of arrangements generally intended to have said capability).

This view seems to fall into the same traps as earlier definitions of art in that it can be said to be both too wide and too narrow. The functionalist has some responses to this. To the charge of being too narrow, the functionalist can respond with a wider definition of aesthetic properties that includes non-perceptual qualities, which would give conceptual pieces like those of Warhol and Duchamp their proper due as art. The functionalist could also double down and claim that pieces like these do not constitute art, but are comments on art. In response to the functionalist account being too broad, the functionalist can dismiss things like nice cars or elegant mathematical equations using a distinction between primary and secondary functions and their effects on art status.

Of the relational theories of art there are two major strains, procedural (how art is given art-status) and historical (how art is related to past art).

The popular proceduralist theory of art is George Dickie’s institutional theory of art, which states that an artwork is an artifact that is presented by the artist to an Artworld audience. He later revised this theory into a set of interlocking definitions:

- An artist is someone who knowingly creates art.
- Art is an artifact of a kind to be presented to an Artworld public.
- A public is a set of persons who are prepared to (partially) understand an object that is presented to them.
- The Artworld is the totality of all Artworld systems.
- An Artworld system is a framework for the presentation of a work of art by an artist to an Artworld public.

It is obvious that this second account is circular, but Dickie argues that it still positively represents art and the manner in which it exists. The circularity of the account is a reflection of art’s nature. Another common objection to Dickie’s definition is that this Artworld social structure is hard to distinguish from other similar social structures, making it fail to properly distinguish art from non-art.

The historical theory of art defines something as art if it stands in a certain relation to past artworks. Proposed relations have been intentional (present art has been made with the intent of being regarded in the same way as past art), functionalist (present art succeeds in performing one of the functions of an established art form), or stylistic (present art has been made in a similar style to past art).

Both kinds of relational definitions have fallen to similar criticisms. For one, they lack an account of the original artworks or Artworld that future art stands in relation to. Relational definitions also have a universality problem: they seem to suggest that there is one narrative of art and fail to account for art from other cultures and histories outside the traditional canon. They seem to exclude the possibility of a lone artist outside of the art-history narrative. These criticisms have been met by providing a functionalist account for the ‘original’ artworks and for artworks from independent Artworlds. Generally, there have recently been moves to hybrid theories of art, as these relational and aesthetic definitions do not seem to necessarily conflict and in some cases resemble or invoke each other.

I tend to agree with Weitz’s approach to the issue, that a real, distinct definition of art cannot be made, in that art is not a concept with distinct boundaries. However, theories of art can be useful in defining what we tend to think of as art and in providing us with fresh perspectives on what art can be.

Do you think either the relationalist or functionalist definitions succeed? Or has it been correctly shown that real definitions of art are impossible? Are theories of art even valuable, or are they a waste of time?

Further Reading:

- The Role of Theory in Aesthetics – Morris Weitz
- Definitions of Art – Stephen Davies
- The Artworld – Arthur Danto

Physician Rita Charon on how stories matter to medicine

Tasks like discerning the difference between modern and postmodern illness would prove difficult for anyone without appropriate training in the arts and humanities. What is and what isn’t a fact has never been obvious or uncontroversial. There was no golden age of truth. Given present-day notions of post-truth in an era of decreasing trust in authorities, physicians and other health care professionals find themselves faced with understanding humanity’s struggles from several different points of view. As I sat in the crowded audience of the Warner Theatre in downtown Washington, D.C., I was lost in thought. Staring at the paintings that physician and literary scholar Rita Charon discussed, I reflected upon their aesthetic and moral value as they related to medicine. According to Charon, the field of narrative ethics seeks to address these issues.

According to David Morris, a contributor to Rita Charon’s book Stories Matter, the modern perspective is “biomedical”: we are our genes, our organs, our laboratory measurements. The postmodern perspective is “biocultural”: we are made of stories. These stories have dimensions that are cultural, familial, emotional, interpersonal, psychological, and biological.

Two weeks ago I had the amazing opportunity to attend Charon’s 2018 Jefferson Lecture in the Humanities, “To See the Suffering: The Humanities Have What Medicine Needs.” As Charon projected Whistler’s painting “Sea And Rain 1865” before my eyes, she commented how the painting demonstrates, “the human scale of physicality, the cosmic scale of the oceans and relativity, and the existential dilemma of meaning are together in the universe and in each individual human body.” By the end of the lecture I found myself wanting to sit down and stare at paintings, read books, and spend the rest of my life in this intellectual bliss, cultivating the passions I once had as an undergraduate.

“Sea And Rain 1865” – James Abbott McNeill Whistler

As physicians treat patients, uncover the nature of disease, and set educated standards in the field of health care, they create stories. These stories, when physicians create them, become a way of “reading,” as Charon describes it. Physicians and medical ethicists come together and, through the constructionist notion that we are narratively constructed, create meaning from the world and form a deeper understanding of medicine. A diagnosis becomes less about treating a patient as a biological or physiological problem and more about treating them as a human. This gives rise to the ethical dilemmas, epistemic purposes, and other issues grounded in speculation that a physician would encounter. Charon herself studies these issues from the point of view of both a physician and a literary scholar. Shedding light on this humanistic discourse of medicine, the narratives constructed by narrative ethicists are a modified version of postmodernism. In this sense, narratives don’t constitute persons themselves, but they are the most effective way of accessing persons. Those who learn from fiction, literature, poetry, philosophy, and the arts gain a nuanced, weighty understanding of medicine they can use in any physician’s context. Doctors who embrace these techniques get the truest, most humanistic vision of what makes a patient a patient. They can attack fundamental ideas of disease and health such that those concepts carry appropriate meaning in a 21st-century world of post-truths.

The self is within the narrative. One cannot look for one without finding the other. Searching for meaning as a physician would be like creating a pattern of a fabric interwoven that only becomes clear when one takes a step back and looks at the entire picture, or declaring a line as beautiful from points of data on a graph. Charon herself notes that “the self cannot be created — or even found — independent of narrative activities.” Still, other scholars might argue that a true self is to be found if one looks close enough or reflects for long enough. Regardless, physicians should understand how similar they themselves are to patients to practice with both their own objective professionalism alongside the personal, intimate stories of a patient. With characters that have their own backgrounds, morals, beliefs, and even blood type, physicians can make the most informed decisions to adequately provide for patients.

In the packed Warner Theatre of downtown Washington, D.C., I sat on the edge of my seat. I grasped my chin as I fell deep in thought and immersed myself in every moment of Charon’s speech. Inside of me a feeling erupted. I began to see elegant patterns between the sciences and the arts. With this robust, interconnected knowledge of both the sciences and the humanities, I felt as though I could transcend both disciplines, and I sat in awe at how Charon used craftiness and wisdom to weave medicine and the humanities together in such a way that she could engage anyone with the pure intentions of learning and making the world a better place.

Physicians have a duty to recognize the principles that govern their profession, most notably beneficence, non-maleficence, justice, and autonomy. The role these concepts play in medical ethics and bioethics, and the exact relationship among those principles, serve as the basis for decisions physicians make. But the narratives and principles physicians use are often at odds with one another. Marginalized groups of people have their own voice in narrative ethics, which blurs the lines between human differences, while “principlist” ethics lays down ethical rules by the fundamental principles themselves. Physicians and scholars can view the world through the absolutes of principlism or the gray area of narrative ethics. Taking the narrative to the extreme, not just in the lives of physicians but by placing the narrative at the heart of all knowledge, has fundamental implications for the way scientific research is performed as well. As medical ethicists take note of how these senses of constructionism and principlist ethics govern medicine, they extend these narrative techniques to the sciences. The narrative of the sciences brings this human form to research. As more and more physicians and medical students realize the power and value of the humanities in their work, physicians can take more humanized, mature, and ethical approaches to whatever task they may have to do.

Debra Malina of the New England Journal of Medicine, writes “Many of the contributors to Rita Charon’s Stories Matter are major players in this narrative movement. Here, they practice what they preach, building their essays on stories of patients who want to conceal their medical conditions from their families, 60-year-old women who want to use assisted reproductive technology, parents of infants born with neurologic injuries who want to let them die — stories on whose proper endings reasonable people might disagree. The authors do agree on certain concepts — the emphasis on particulars, multiple perspectives, context, and emotional as well as rational understanding. Many stress the obligation incurred by hearing a story of suffering.”

It’s difficult to establish clear rules and guidelines for physicians developing narratives. If anything, the way to form narratives that encompass the humanistic side of medicine is more about physicians and medical students developing senses of right and wrong, aesthetics, purpose, intention, and motive in whatever they do. What procedure a physician chooses to take, especially in the details of a story such as character development and plot, may not be set in stone, but the implications and premises upon which those conclusions are reached hold a great amount of value for the meaning of that story. Techniques from literature that become essential for physicians become the actions of physicians themselves such as the way doctors obtain consent from patients or debate among themselves on ethics committees. The variation could be seen as something that makes the process all the more humane, and embracing the uncertainty provides physicians the way to understand the human condition all the more. Physicians, however, need to account for this sort of ambiguity in the work that they do.

The crises people face today, brought on by postmodernism and post-truth dialogue, make it difficult for Charon and the other contributors to Stories Matter to give single, perfect answers to the specific issues physicians face. Instead, they provide a framework to manage the relationships among physicians and patients. However detached or disillusioned one may be with these limitations, addressing them in a sense based in reality gives the reader some solace and connection to the thoughts of the contributors. Some of the contributors argue that going over different perspectives that seem to contradict one another is sufficient, while others maintain physicians should return to a narrative-based approach to the principles of medical ethics. The perspectives, research, anecdotes, and reasoning of the contributors can provide physicians with a place to start when understanding their profession on a more humane level.

Charon’s writing and lecture gave me a greater appreciation for the work of physicians given my background in both the sciences and the humanities. It made me all the more excited to tackle the intellectual issues of the 21st century.

The role of neural networks in machine learning

Neural networks (NN) are algorithms used to extract information and conclusions from large sets of data by recognizing underlying relationships in the data, in a way loosely modeled on how a human brain works. They hold a tremendous amount of potential for deep learning, part of a broader family of machine learning (ML) methods based on learning data representations, as opposed to task-specific algorithms. NN and deep learning are now computationally feasible thanks to GPUs, and they show remarkable power on complex prediction problems that have very high dimensionality and millions to billions of samples.

However, for smaller-scale problems this approach would likely be overkill. Designing and building a NN, tuning hyperparameters, and so on require specific experience, skills, and time. In many cases this cannot be justified even by a gain in the model’s performance. Besides, it is not correct to juxtapose the two, because NN are part of ML. NN are designed to solve particular classes of problems; they are not universal solvers. In some cases even ML is overkill and a statistical test could be sufficient, for example.

Let’s say that you have a problem to model in order to make accurate predictions. We always kind of have an intuitive sense of the *variables/parameters* of this model. Learning is all about fitting a set of parameters to some data such that it can reproduce the data accurately. From traditional statistics to machine learning to deep learning, that’s always what’s being done.
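The phrase "fitting a set of parameters to some data" can be shown with the smallest possible case: a straight line with two parameters. This is a minimal sketch using ordinary least squares written out by hand; the function name `fit_line` and the toy data are illustrative assumptions, not taken from any library.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x, and how x and y vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Toy data generated by the rule y = 2x + 1, with no noise.
xs = [1, 2, 3, 4]
ys = [3, 5, 7, 9]

slope, intercept = fit_line(xs, ys)
print(slope, intercept)  # 2.0 1.0


def predict(x):
    """Use the two learned parameters to reproduce or extend the data."""
    return slope * x + intercept


print(predict(5))  # 11.0
```

Machine learning and deep learning models do the same kind of thing with far more parameters and an iterative search in place of a closed-form formula.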

How many parameters do you think would be able to model such a probability? A lot? A few? It’s a trivial example, but the same applies to less trivial ones where we know the number of parameters is limited, e.g. predicting the maximum speed of a car (weight, engine type, fuel, etc.). For these problems, it clearly looks like statistics would be able to fit the data. The relationship seems linear and relies on only a few parameters. Now, say you want to classify emails as spam or not spam. How many parameters? Some words can help flag an email as spam, sure, but do you have a full list?

I can also send spam by using a very standard language, without forcing you to buy or putting many links. It looks like the model will be richer than simply a few parameters. In fact, it looks like machine learning would help to learn a few hundred parameters to really be able to model what is a spam email.

Now you want to recognize faces in pictures. How complex do you think it is? Do all eyes look the same? Mouths? Skin color? Hair shape and color? Elements like eyes, nose, and mouth only make sense when they are put together in a meaningful way (unlike the way Picasso liked to paint them). See, while our brains do it very easily, it’s really hard to grasp what makes a face. A very complex and rich model will be needed here, able to model A LOT of potential faces to efficiently recognize them.

Therefore, for a problem like this one, deep learning is most likely to yield better results than the previous approaches. Finally, if you select an approach too big for the task, you will simply 1) overfit the data and 2) spend WAY too much time building a complex approach for no benefit, quite the contrary…
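Overfitting can be seen even without a neural network. The sketch below is a toy stand-in, under stated assumptions: the data come from a made-up linear rule with noise, the "too big" model is a memorizer that returns the answer of the nearest training point (effectively one parameter per sample), and the "right-sized" model is a two-parameter line. All names are hypothetical.

```python
import random


def make_data(n, rng):
    """Noisy samples from a hypothetical linear rule y = 2x + 1."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + 1 + rng.gauss(0, 1) for x in xs]
    return xs, ys


def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_squared_error(predict, xs, ys):
    """Average squared gap between predictions and true values."""
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


rng = random.Random(0)
train_x, train_y = make_data(500, rng)
test_x, test_y = make_data(500, rng)

slope, intercept = fit_line(train_x, train_y)


def line(x):
    """Small model: two parameters."""
    return slope * x + intercept


def memorizer(x):
    """Oversized model: repeat the answer of the closest training point."""
    nearest = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[nearest]


memorizer_train = mean_squared_error(memorizer, train_x, train_y)
memorizer_test = mean_squared_error(memorizer, test_x, test_y)
line_test = mean_squared_error(line, test_x, test_y)

print(f"memorizer, training error: {memorizer_train:.2f}")
print(f"memorizer, test error:     {memorizer_test:.2f}")
print(f"line, test error:          {line_test:.2f}")
```

The memorizer looks perfect on the data it has already seen because it has stored the noise along with the signal, and that stored noise is exactly what hurts it on new data.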

An insight into philosopher Paul Feyerabend, an imaginative maverick

Paul Feyerabend (1924-1994), having studied science at the University of Vienna, moved into philosophy for his doctoral thesis, made a name for himself both as an expositor and (later) as a critic of Karl Popper’s “critical rationalism”, and went on to become one of the twentieth century’s most famous philosophers of science. An imaginative maverick, he became a critic of philosophy of science itself, particularly of “rationalist” attempts to lay down or discover rules of scientific method.

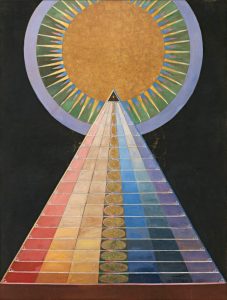

Hilma af Klint, Group X, No. 1, Altarpiece, 1915

Born to a civil servant and a seamstress, Feyerabend took up reading as well as singing during his childhood. Having passed his final high school exams in March 1942, he was drafted into the Arbeitsdienst (the work service introduced by the Nazis), and sent for basic training in Pirmasens, Germany. Feyerabend opted to stay in Germany to keep out of the way of the fighting, but subsequently asked to be sent to where the fighting was, having become bored with cleaning the barracks! He even considered joining the SS, for aesthetic reasons. His unit was then posted at Quelerne en Bas, near Brest, in Brittany. Still, the events of the war did not register. In November 1942, he returned home to Vienna, but left before Christmas to join the Wehrmacht’s Pioneer Corps. Their training took place in Krems, near Vienna. Feyerabend soon volunteered for officers’ school, not because of an urge for leadership, but out of a wish to survive, his intention being to use officers’ school as a way to avoid front-line fighting. The trainees were sent to Yugoslavia. In Vukovar, during July 1943, he learnt of his mother’s suicide, but was absolutely unmoved, and evidently shocked his fellow officers by displaying no feeling.

In 1945, Feyerabend was shot in the hand and in the belly during the retreat from the Russian Army. The bullet damaged his spinal nerves. Two years later, he returned to Vienna to study history and sociology at the University, later transferring to physics. His first article, on the concept of illustration in modern physics, was published during this period. Feyerabend could be described as “a raving positivist” at the time. The student nevertheless found himself persuaded by Walter Hollitscher of the cogency of realism about the “external world” (Popper’s important arguments for realism came somewhat later). The considerations Hollitscher deployed were, first, that scientific research was conducted on the assumption of realism, and could not be otherwise conducted, and, second, that realism is fruitful and productive of scientific progress, whereas positivism was simply a commentary on scientific results, barren in itself.

Feyerabend received his doctorate in philosophy for his thesis on “basic statements” in 1951. He applied for a British Council scholarship to study under Wittgenstein at Cambridge, but Wittgenstein died before Feyerabend arrived in England, so Feyerabend chose Popper as his supervisor instead.

In 1975, Feyerabend published his first book, Against Method, setting out “epistemological anarchism”, whose main thesis was that there is no such thing as the scientific method. Great scientists are methodological opportunists who use any moves that come to hand, even if they thereby violate canons of empiricist methodology.

In his article, “How to Defend Society Against Science”, the philosopher sought to defend society and its inhabitants from all ideologies, science included. All ideologies must be seen in perspective. One must not take them too seriously. One must read them like fairytales which have lots of interesting things to say but which also contain wicked lies, or like ethical prescriptions which may be useful rules of thumb but which are deadly when followed to the letter.

Now, is this not a strange and ridiculous attitude? Science, surely, was always in the forefront of the fight against authoritarianism and superstition. It is to science that we owe our increased intellectual freedom vis-a-vis religious beliefs; it is to science that we owe the liberation of mankind from ancient and rigid forms of thought. Today these forms of thought are nothing but bad dreams, and this we learned from science. Science and enlightenment are one and the same thing; even the most radical critics of society believe this. Kropotkin wants to overthrow all traditional institutions and forms of belief, with the exception of science. Ibsen criticizes the most intimate ramifications of nineteenth-century bourgeois ideology, but he leaves science untouched. Levi-Strauss has made us realize that Western Thought is not the lonely peak of human achievement it was once believed to be, but he excludes science from his relativization of ideologies. Marx and Engels were convinced that science would aid the workers in their quest for mental and social liberation. Are all these people deceived? Are they all mistaken about the role of science? Are they all the victims of a chimaera?

To these questions my answer is a firm Yes and No.

Now, let me explain my answer.

The explanation consists of two parts, one more general, one more specific.

The general explanation is simple. Any ideology that breaks the hold a comprehensive system of thought has on the minds of men contributes to the liberation of man. Any ideology that makes man question inherited beliefs is an aid to enlightenment. A truth that reigns without checks and balances is a tyrant who must be overthrown, and any falsehood that can aid us in the overthrow of this tyrant is to be welcomed. It follows that seventeenth- and eighteenth-century science indeed was an instrument of liberation and enlightenment. It does not follow that science is bound to remain such an instrument. There is nothing inherent in science or in any other ideology that makes it essentially liberating. Ideologies can deteriorate and become stupid religions. Look at Marxism. And that the science of today is very different from the science of 1650 is evident at the most superficial glance.

For example, consider the role science now plays in education. Scientific “facts” are taught at a very early age and in the very same manner in which religious “facts” were taught only a century ago. There is no attempt to waken the critical abilities of the pupil so that he may be able to see things in perspective. At the universities the situation is even worse, for indoctrination is here carried out in a much more systematic manner. Criticism is not entirely absent. Society, for example, and its institutions, are criticized most severely and often most unfairly and this already at the elementary school level. But science is excepted from the criticism. In society at large the judgement of the scientist is received with the same reverence as the judgement of bishops and cardinals was accepted not too long ago. The move towards “demythologization,” for example, is largely motivated by the wish to avoid any clash between Christianity and scientific ideas. If such a clash occurs, then science is certainly right and Christianity wrong. Pursue this investigation further and you will see that science has now become as oppressive as the ideologies it had once to fight. Do not be misled by the fact that today hardly anyone gets killed for joining a scientific heresy. This has nothing to do with science. It has something to do with the general quality of our civilization. Heretics in science are still made to suffer from the most severe sanctions this relatively tolerant civilization has to offer.

Wolfgang Tillmans, Philharmonie Bloch III, 2017.

Is this unfair? Have I not presented the matter in a very distorted light by using tendentious and distorting terminology? Must we not describe the situation in a very different way? I have said that science has become rigid, that it has ceased to be an instrument of change and liberation, without adding that it has found the truth, or a large part thereof. Considering this additional fact we realize, so the objection goes, that the rigidity of science is not due to human will. It lies in the nature of things. For once we have discovered the truth. What else can we do but follow it?

This trite reply is anything but original. It is used whenever an ideology wants to reinforce the faith of its followers. “Truth” is such a nicely neutral word. Nobody would deny that it is commendable to speak the truth and wicked to tell lies. Nobody would deny that, and yet nobody knows what such an attitude amounts to. So it is easy to twist matters and to change allegiance to truth in one’s everyday affairs into allegiance to the Truth of an ideology which is nothing but the dogmatic defense of that ideology. And it is of course not true that we have to follow the truth. Human life is guided by many ideas. Truth is one of them. Freedom and mental independence are others. If Truth, as conceived by some ideologists, conflicts with freedom, then we have a choice. We may abandon freedom. But we may also abandon Truth. (Alternatively, we may adopt a more sophisticated idea of truth that no longer contradicts freedom; that was Hegel’s solution.) My criticism of modern science is that it inhibits freedom of thought. If the reason is that it has found the truth and now follows it, then I would say that there are better things than first finding, and then following such a monster.

Meditative thoughts on symmetry in relation to the nature of beauty

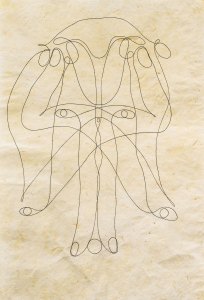

Tunga, Untitled, 2011, ink on paper, 29 7⁄8 × 20″. From the series “La voie humide,” 2011–16.

In approaching the topic of symmetry (in its many forms through nature, philosophy, music, and even logic), we find many different expressions of beauty. Symmetry itself becomes a feature that almost defines beauty, from the elegant equations we craft in mathematics and physics to our own perceptions of facial features. In symmetry, we find a similarity among all these myriad forms of beauty, and, within symmetry itself, the repetition of a feature creates a sort of rhythm that invokes aesthetic pleasure. In searching for unifying principles among several different perceptions, subjective experiences, and even more objective forms of reasoning, we can view this sort of unity as something that creates defined, certain meaning among many forms. Symmetry becomes a rhythm, like the equality on both sides of an equals sign in a mathematical equation. And, in creating these uniformities among observations, judgements, and perceptions, we can deepen our senses of the world and make discoveries in science and philosophy that we couldn’t have made before.

Unity would seem to be the virtue of forms, but a moment’s reflection will show us that unity cannot be absolute and be a form; a form is an aggregation, it must have elements, and the manner in which the elements are combined constitutes the character of the form. A perfectly simple perception, in which there was no consciousness of the distinction and relation of parts, would not be a perception of form; it would be a sensation. This sensation is the key to understanding the relation between moral value and aesthetic pleasure that the arts and sciences invoke within us.

Beauty in all forms, as aesthetic philosophers may pronounce, invokes physiological sensations within ourselves. We come to know and determine the nature of these sensations through our appreciation of art (and other aesthetic pleasures), and we create them in ways we observe every day and in anything. A pixel on a computer screen, the beat of a percussive instrument during a song, or even a vibration that travels through space and approaches our ears creates patterns as these elements aggregate, combine, and form with one another. Whatever bodily change or effect of a nervous process we experience as a result is our body’s method of interpreting and analyzing these aesthetic forms.

Those who pay close attention to these sensations of their bodies and use them to discover new meaning, purpose, value, and other forms of wisdom can reap the benefits of these methods of reflection. But only through this close, careful introspection and reflection upon meaning and value through these aesthetic means (not only symmetry but other methods as well) can we begin to understand the nature of beauty. The form, brought about by art and, especially, symmetry, makes us more aware of and sensitive to the thoughts, ideas, principles, and means of imparting knowledge that make us human.

The part of aesthetic nature we find appealing is beauty of form. In this sense, form is how these objects of beauty are expressed. In aesthetic terms, form is removed both from the rudimentary nature of formless stimulation and from the emotional looseness of remaining lost in senseless thought.

Borrowing from the work of George Santayana, I believe there can be a coming together of beauty and form that a human being performs in the mind. We create inferences and insights about what we observe aesthetically and sense the unity as discussed earlier. Beyond the sensation itself and deep within the insights offered by works of art, we can detect the elements that underlie beauty.